A large language model (LLM) is an AI system trained on vast amounts of text to understand and generate human language. Unlike traditional software that follows fixed rules, LLMs learn statistical patterns from data, enabling them to write, summarize, translate, answer questions, and reason across complex topics. In 2025, the leading LLMs — GPT-4, Gemini, Claude, and 5 others — differ significantly in reasoning depth, context length, cost, and the tasks they handle best.

To choose well, it helps to compare LLMs side by side. This article walks through the leading LLMs of 2025 so you can see how they are built and where they perform best.

Key Takeaways

- No single LLM wins across all tasks — GPT-4 leads in general reasoning, Gemini in long-context and multimodal, Claude in safety-critical and instruction-following tasks, and LLaMA 3 for teams needing open-source control.

- Context window size matters more than parameter count for enterprise use — Gemini 1.5 Pro’s 1M token window makes it uniquely suited for document-heavy workflows.

- Open-source vs. closed is a cost and control decision — LLaMA 3, Mistral, and DeepSeek are free to use but require your own infrastructure; GPT-4 and Claude trade cost for convenience and reliability.

- For customer service specifically, RAG-optimized models like Cohere Command R+ outperform general-purpose models because they retrieve from your own knowledge base rather than generating freely.

- Pricing models diverge sharply at scale — per-token pricing on GPT-4 Turbo and Claude compounds quickly at high volume; open-source models become cheaper above a threshold that’s worth calculating before committing.

What is an LLM? The Relationship Between AI and LLMs

Large language models (LLMs) are a major part of today’s AI systems. They are trained on large amounts of text to understand and generate human-like language. But before we compare LLM models, you have to understand what they are and how they relate to AI.

What is an LLM in AI?

LLMs are a type of artificial intelligence designed to work with language. They do not think or understand like humans, but can predict and generate text based on patterns they have learned. This allows them to answer questions, write emails, summarize documents, translate languages, and more.
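To make "predicting text from learned patterns" concrete, here is a deliberately tiny sketch. It is not how GPT, Claude, or Gemini work internally (those use large neural networks), and the corpus and function names are made up for illustration. It only shows the core idea: count which word tends to follow which, then predict the most likely next word.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Toy training text (hypothetical); real models train on vastly more data.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigram(corpus)
print(predict_next(model, "the"))  # "cat" follows "the" most often here
```

A real LLM replaces the counting table with billions of learned parameters and looks at far more than one previous word, but the task is the same: pick a plausible next token.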

When people ask what an LLM is in AI, they usually mean models like GPT, Claude, or Gemini that can process and produce text. These models are built with machine learning, improve with each new round of training, and can also be fine-tuned for specialized tasks.

AI LLM and Where It Is Used

LLMs are one part of the larger AI field. Some are free to use, while others are paid. They are used in many areas, such as:

- Chatbots that answer customer queries and support service teams

- Writing tools that help with emails or reports

- Code assistants that suggest or fix code

- Virtual tutors that explain lessons or solve problems

- Search tools that give better answers from websites or files

A proper LLM comparison looks at speed, cost, and how well the model fits the task. Because every model has different strengths, compare them against your day-to-day needs so you can choose without guessing or relying on broad claims.

A Review and Comparison of Popular AI Foundation Models in 2025

The global market for large language models is expected to grow from about $1.59 billion in 2023 to nearly $259.8 billion by 2030. With so many advanced tools now available, it is worth comparing LLMs based on what they offer, how they perform, and where they are used.

1. OpenAI

OpenAI's models regularly appear near the top of LLM comparisons. OpenAI became well known for its GPT series, particularly after GPT-3 arrived in 2020. That model had 175 billion parameters and could generate text, translate languages, and answer questions.

GPT-3 was among the earliest widely used LLMs, though it showed limitations in deep, multi-step reasoning. In 2022, GPT-3.5 was launched with better accuracy and speed. It is still widely used today, especially in the free version of ChatGPT.

GPT-4 Series and Performance

In 2023, GPT-4 was released with better reasoning, coding, and safety. GPT-4 Turbo followed with faster replies and lower prices. These models score near the top of many LLM evaluations and are strong in multimodal tasks.

Pricing: GPT-4 Turbo is available in ChatGPT Plus at $20/month. Business APIs are priced separately.

Current features include (some still in testing):

- Advanced voice capabilities

- Deep Research (online multi-step research)

- GPT-4.5 and Operator previews

- o3 Pro mode for high-level answers

- Sora for video generation

- Codex Agent for coding tasks

OpenAI remains a top choice for both everyday and advanced use.

2. Google Gemini (DeepMind)

Gemini, which is built by DeepMind and released by Google, is a group of advanced large language models focused on logic, math, and multimodal understanding. It now powers many Google services after replacing Bard in 2024.

Model Versions and Capabilities

Gemini comes in three types:

- 1.5 Pro – Strong at long-context tasks (up to 1 million tokens).

- 1.5 Flash – Lightweight and fast, for high-speed tasks.

- Nano – Works offline on devices like the Google Pixel.

It ranks near the top of public LLM leaderboards, scoring close to GPT-4 in coding and reasoning tasks, and is included in almost every LLM comparison.

Usage and Pricing

Gemini Advanced (1.5 Pro) is part of the Google One AI Premium Plan at $19.99/month.

A basic free version is also available for general tasks. It integrates with Gmail, Docs, and other Workspace tools for better productivity.

3. Anthropic Claude

Claude is an LLM developed by Anthropic. It is designed to be safer and more aligned with human goals. Claude is known for its calm, thoughtful responses and strong performance in complex tasks.

Early Versions of Claude

The first versions, like Claude 1 and Claude 2, became well known during early LLM comparisons. Claude 2.1 was released in late 2023 and could process up to 200,000 tokens at once. It handled writing, summaries, and complex tasks well.

These early versions were also known for being polite, helpful, and less likely to give false replies. Claude became a trusted model for tasks that required accurate answers.

Latest Versions of Claude

In 2025, Claude Sonnet 4 and Claude Opus 4 are the latest Claude models. Released in May, they are much stronger than earlier versions and are used for deep work like research, coding, and legal analysis. Claude models remain popular in LLM comparisons, mostly for their safety focus and close instruction following.

Important Features

- Works on web, iOS, Android, and desktop

- Writes, edits, and analyzes content

- Connects with Google tools like Gmail and Calendar

- Claude Code helps with data and coding tasks

Pricing

Claude has a free plan with basic features like writing, coding, and web access. The Pro plan costs $17 monthly (yearly billing) or $20 (monthly billing) and adds tools like Claude Code and Google integration. The Max plan starts at $100 per user per month for advanced features.

4. Amazon Bedrock

Amazon Bedrock is a service that allows access to different LLMs developed by various companies. For those who want flexibility, Amazon Bedrock offers a way to compare LLM models and choose what suits their work best without much complexity. Instead of building your own model, you can choose from several options available on this platform.

This includes models like Claude, Jurassic, and Amazon’s own Titan. It is useful for businesses that want to use language tools in their applications without developing them from scratch.

Main Features

It supports text creation, question answering, and other writing tasks. You only pay for what you use. Bedrock does not require you to handle the technical setup or maintenance of the models.

Pricing

There is no fixed monthly fee. The cost depends on how many tokens you process or, for reserved capacity, how long you keep the model provisioned. Different models on Bedrock have different rates.

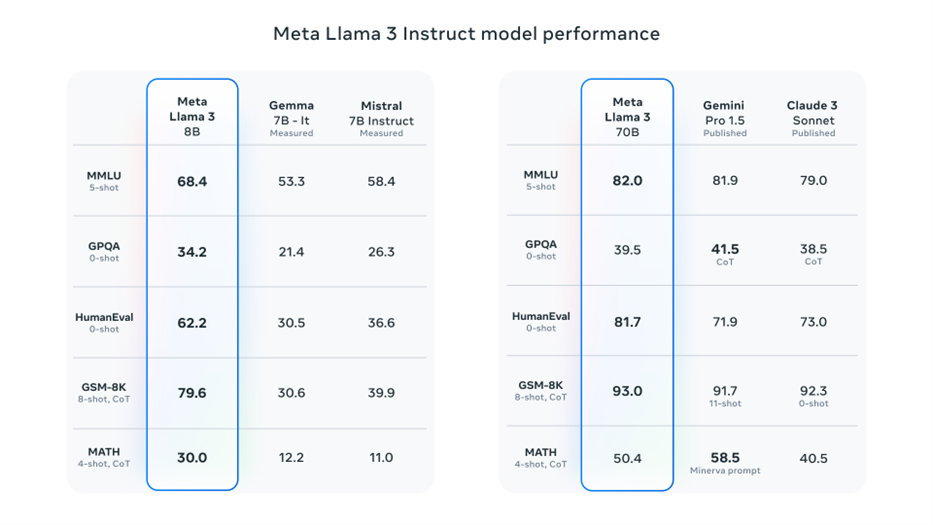

5. Meta LLaMA 3

Meta LLaMA 3 is a group of large language models that are freely available for research and commercial use. It was developed by Meta and is offered in two main sizes, 8B and 70B parameters. These models are designed to perform well on tasks such as writing, coding, and answering complex questions.

Main Features

LLaMA 3 models are open access and can be downloaded directly. You can run them on your own computer if you have strong enough hardware. These models are suitable for those who want control over how the model is used or want to build their own tools.

Usage and Costs

There is no official pricing because Meta does not charge for the model itself. However, you will need to invest in proper hardware. Running the model also requires time and energy.
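Because the model is free but the hardware is not, it can help to estimate the volume at which self-hosting starts to pay off against a per-token API. The sketch below is a rough illustration only: the function name and both dollar figures are hypothetical placeholders, not quotes from Meta or any provider, and it ignores staff time and quality differences.

```python
def break_even_tokens_per_month(fixed_monthly_cost, api_price_per_million):
    """Tokens per month at which self-hosting costs the same as a per-token API."""
    if api_price_per_million <= 0:
        raise ValueError("API price must be positive")
    return fixed_monthly_cost / api_price_per_million * 1_000_000

# Hypothetical inputs, for illustration only.
gpu_server_monthly = 2000.0   # assumed hardware + energy + upkeep, USD per month
blended_api_price = 10.0      # assumed blended API price, USD per 1M tokens

threshold = break_even_tokens_per_month(gpu_server_monthly, blended_api_price)
print(f"Break-even at about {threshold:,.0f} tokens per month")
```

Below the threshold, a paid API is likely the cheaper option; above it, running LLaMA 3 on your own hardware may start to win. Substitute your own quotes before drawing conclusions.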

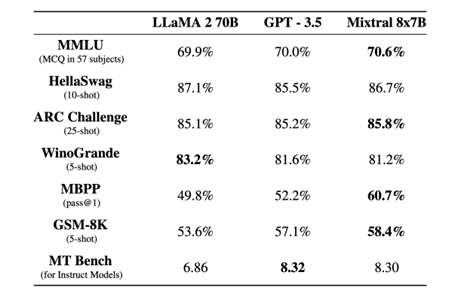

6. Mistral (Mixtral 8x7B)

Mistral AI develops open-weight large language models focused on speed, openness, and code generation. It gained recognition for publishing powerful models without restricting access, which positions it as a transparent alternative and makes it a frequent entry in LLM comparisons.

Model Evolution and Performance

The first release, Mistral 7B, was followed by Mixtral 8x7B, a mixture-of-experts model that outperforms LLaMA 2 and GPT-3.5 in many benchmarks. These models are optimized for multi-tasking and cost-efficiency, especially in coding and reasoning.

- Mistral 7B: Dense model, released openly in 2023

- Mixtral 8x7B: MoE architecture, only 2 of 8 experts active per token

- Beats LLaMA 2 70B on multiple tasks

- Designed to be fast and memory-efficient

- Strong performance in code, translation, and logic
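The "only 2 experts active" idea from the list above can be sketched in a few lines. This is a simplified illustration, not Mistral's implementation: the experts here are toy functions, the router scores are made up, and a real model applies this routing inside every layer with learned weights.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top2_route(router_scores):
    """Pick the two highest-scoring experts and renormalize their weights."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:2]
    weights = softmax([router_scores[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_layer(token_value, router_scores, experts):
    """Run only the selected experts and mix their outputs by weight."""
    return sum(w * experts[i](token_value)
               for i, w in top2_route(router_scores))

# Eight toy "experts": each just scales its input by a different factor.
experts = [lambda x, k=k: x * (k + 1) for k in range(8)]
router_scores = [0.1, 2.0, -1.0, 0.3, 2.0, 0.0, -0.5, 0.2]  # made-up router output

print(top2_route(router_scores))
print(moe_layer(1.0, router_scores, experts))
```

Six of the eight experts are never called for this token, which is why a sparse model can hold many parameters while using only a fraction of them per step.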

Pricing Options

Mistral has flexible pricing. The Hero plan is completely free and gives access to basic AI tools. The Pro plan costs $14.99 per month and unlocks longer conversations and more advanced features.

For teams, the Team plan is $24.99 per user monthly and adds collaborative functions.

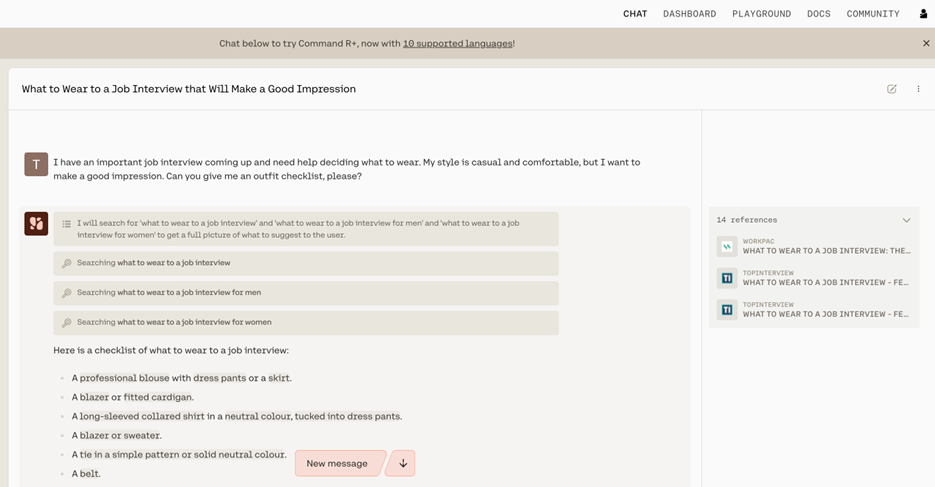

7. Cohere Command R+

Cohere is a Canadian company that provides language models designed for business needs. Its flagship models, Command R and Command R+, excel at tasks like summarization, long-form Q&A, and generating responses based on external information using retrieval-augmented generation (RAG).

Pricing of Command R+

- Input Tokens: $3 per million

- Output Tokens: $15 per million

- Deployment: Available via API, Cohere’s platform, or open-source platforms

- Support: Free testing tier available; enterprise support on request

When you compare LLM models for RAG and enterprise use, Command R+ is a strong open alternative to models like GPT-4 or Claude 3. It delivers competitive results in grounded generation tasks and supports easy integration with private or hybrid cloud systems.
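To show what grounded generation means in practice, here is a minimal RAG-style sketch. It does not call Cohere's API or any real model: retrieval is a simple word-overlap ranking, the knowledge base is invented, and the function names are hypothetical. Production systems typically use embedding search instead.

```python
def tokenize(text):
    """Lowercase and split into a set of words, ignoring basic punctuation."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in text.lower())
    return set(cleaned.split())

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    q_words = tokenize(question)
    scored = sorted(documents,
                    key=lambda d: len(q_words & tokenize(d)), reverse=True)
    return scored[:top_k]

def build_prompt(question, passages):
    """Assemble a grounded prompt that tells the model to stay within the context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

# Invented knowledge base for illustration.
knowledge_base = [
    "Refunds are issued within 14 days of receiving the returned item.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available by chat from 9am to 6pm on weekdays.",
]
question = "How long does shipping take?"
passages = retrieve(question, knowledge_base)
print(build_prompt(question, passages))
```

The resulting prompt is what would be sent to the LLM. The model's job shrinks from "know the answer" to "read the retrieved passage and phrase the answer", which is where RAG-oriented models aim to do well.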

8. DeepSeek

DeepSeek is a series of models developed for scalable use in enterprise and research settings. It supports a large context window and handles both text and code tasks with accuracy. The model is optimized for Chinese and English, offering versatility across languages. It appears often in LLM comparison lists.

Features

- Supports up to 32K context length

- Trained on 2 trillion tokens

- High performance in coding, math, and language reasoning

- Open weights with fine-tuning options

Cost & Access

Standard pricing is around:

- $0.27 per 1 million input tokens

- $1.10 per 1 million output tokens

DeepSeek is well suited for multilingual applications, advanced content generation, and programmatic tasks such as code completion and debugging.

Table: Some Parameters of the 8 AI LLMs

| Model | Company | Parameters | Context Length | Open-Source | Notable Feature |
| --- | --- | --- | --- | --- | --- |
| GPT-4 | OpenAI | Not disclosed | 128k (GPT-4-turbo) | No | Strong reasoning & broad usage |
| Gemini 1.5 Pro | Google DeepMind | Not disclosed | 1M | No | Massive context, multimodal |
| Claude 4 Opus | Anthropic | Not disclosed | 200k | No | High safety alignment |
| Amazon Bedrock (Titan) | Amazon | Not disclosed | Varies | No | Unified API access to many models |
| LLaMA 3 (70B) | Meta | 70B | 8k | Yes | Strong open-source contender |
| Mixtral 8x7B | Mistral | 12.9B active | 32k | Yes | Sparse mixture of experts |
| Command R+ | Cohere | 104B | 128k | Yes | Optimized for RAG & retrieval |
| DeepSeek-V2 (16B) | DeepSeek | 16B | 128k | Yes | Code & math-focused performance |
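The context lengths in the table can be turned into a quick fit check for a given document. The sketch below copies the window sizes from the table (treating "k" as 1,000) and assumes roughly 4 characters per token. That ratio is only a rule of thumb for English text, and each provider's real tokenizer will differ. The function names are hypothetical.

```python
# Context windows in tokens, taken from the table above ("k" read as 1,000).
CONTEXT_WINDOWS = {
    "GPT-4 Turbo": 128_000,
    "Gemini 1.5 Pro": 1_000_000,
    "Claude Opus 4": 200_000,
    "LLaMA 3 (70B)": 8_000,
    "Mixtral 8x7B": 32_000,
    "Command R+": 128_000,
    "DeepSeek-V2": 128_000,
}

def estimate_tokens(char_count, chars_per_token=4):
    """Rough token estimate; 4 characters per token is only a rule of thumb."""
    return -(-char_count // chars_per_token)  # ceiling division

def models_that_fit(char_count, reserve_for_output=1_000):
    """List models whose window holds the document plus room for the reply."""
    needed = estimate_tokens(char_count) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= needed]

# A 600,000-character document is roughly 150,000 tokens under this assumption.
print(models_that_fit(600_000))
```

For anything close to a limit, count tokens with the provider's own tokenizer rather than relying on the character estimate.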

Other Similar Tools/Websites

Some other tools built on large language models include Pi.ai (by Inflection AI), Character.ai, and Replika. These apps focus more on personal conversations, emotional support, and roleplay rather than professional tasks. They are also based on powerful AI models but are designed for a much more casual or social experience.

If you are exploring different kinds of language models, apps like AI Dungeon, Chai, or Janitor AI are also worth trying. Comparing them side by side helps you find the one that fits your use case.

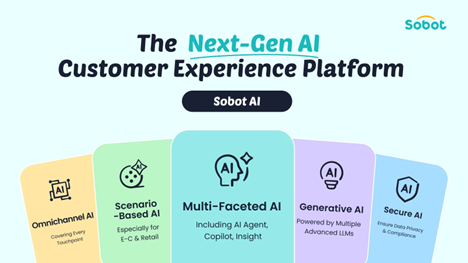

Sobot AI – Combining Multiple Advanced LLMs and SLMs

As a provider of advanced AI solutions focused on customer service, Sobot is powered by leading LLM platforms such as OpenAI, Claude, Amazon Bedrock, and DeepSeek. Its generative AI capabilities break large knowledge bases down into searchable data, retrieve the most relevant information, and generate precise responses. Sobot AI also integrates specialized Small Language Models (SLMs) tailored to industry-specific scenarios, further enhancing service accuracy and professionalism.

Enterprises seeking to elevate customer engagement or optimize operational management can trust our omnichannel customer support solutions.

Conclusion

LLMs are changing fast. Knowing how to compare LLM models helps you pick the right one for your work. Each model is better at different things, like writing, answering questions, solving math problems, or helping your customers.

Sobot uses top models and platforms such as OpenAI, Claude, Amazon Bedrock, and DeepSeek to help businesses improve customer service and manage communication across many channels. If you want to make your work easier and see how these models can help, try Sobot today (Free Demo) and discover what it can do.

FAQs

What is the most accurate LLM in 2025?

Accuracy depends on task type. For general reasoning and coding, GPT-4 Turbo and Claude Opus 4 consistently rank highest on independent benchmarks like the LMSYS Chatbot Arena. For long-document analysis, Gemini 1.5 Pro’s 1 million token context window gives it a structural advantage. For retrieval-based tasks where the model answers from your own knowledge base, Cohere Command R+ outperforms general-purpose models. If a single recommendation is needed: Claude Opus 4 is the safest choice when accuracy in high-stakes, instruction-sensitive contexts is the priority — legal, compliance, and financial service deployments in particular.

What is the cheapest LLM API for high-volume use?

DeepSeek-V2 is the most cost-efficient option at approximately $0.27 per million input tokens — roughly 15 times cheaper than GPT-4 Turbo. For teams willing to manage their own infrastructure, Meta LLaMA 3 and Mistral Mixtral 8x7B are free to run with no per-token charges at all. The important caveat: raw per-token price is misleading. A cheaper model that requires more tokens to reach the same answer quality may cost more overall. Teams processing over 5 million tokens monthly should model 12-month total cost across at least two providers before committing.
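The 12-month cost modeling suggested above can start as a few lines of arithmetic. This sketch uses the per-million-token prices quoted earlier in this article for DeepSeek and Command R+. The workload figures are hypothetical, and the function ignores volume discounts, caching, and retries, so treat the output as a starting estimate only.

```python
PRICES_PER_MILLION = {
    # (input USD, output USD) per 1M tokens, as quoted earlier in this article
    "DeepSeek": (0.27, 1.10),
    "Command R+": (3.00, 15.00),
}

def yearly_cost(model, input_tokens_per_month, output_tokens_per_month, months=12):
    """Total API spend over `months`, ignoring discounts, caching, and retries."""
    in_price, out_price = PRICES_PER_MILLION[model]
    monthly = (
        (input_tokens_per_month / 1_000_000) * in_price
        + (output_tokens_per_month / 1_000_000) * out_price
    )
    return monthly * months

# Hypothetical workload: 5M input and 1M output tokens per month.
for name in PRICES_PER_MILLION:
    print(name, round(yearly_cost(name, 5_000_000, 1_000_000), 2))
```

If the cheaper model needs longer prompts or more retries to reach the same answer quality, raise its token counts accordingly before comparing totals.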

GPT-4 vs Claude vs Gemini — which should I choose?

Choose GPT-4 Turbo for broad, reliable general performance across writing, coding, and reasoning — it has the widest integration ecosystem and suits teams handling diverse tasks. Choose Claude Opus 4 for regulated or safety-sensitive environments where instruction-following accuracy and low hallucination risk are non-negotiable. Choose Gemini 1.5 Pro if your work involves very long documents or deep Google Workspace integration — its 1 million token context window is unmatched among closed models. For most enterprise customer service deployments, Claude and GPT-4 are the strongest starting points.

Which LLM is best for customer service automation?

For customer service, the architecture matters more than the model. A RAG-powered setup — where the LLM answers from your own knowledge base rather than generating freely — consistently outperforms any raw model deployed alone. Within that architecture, Cohere Command R+ is purpose-built for retrieval accuracy. Claude and GPT-4 handle complex multi-turn conversations and escalation logic best. For high-volume, cost-sensitive deployments, DeepSeek-V2 offers strong multilingual performance at significantly lower API cost. The difference between a 40% and 75% autonomous resolution rate comes from knowledge base quality and escalation design — not which LLM you pick.

What is the difference between open-source and closed LLMs?

Open-source LLMs — like Meta LLaMA 3, Mistral, and DeepSeek — release their model weights publicly so anyone can download, run, and fine-tune them without per-token fees. Closed LLMs — like GPT-4, Claude, and Gemini — are accessible only through a paid API; the weights are proprietary. The practical trade-off: open-source offers lower cost at scale, full data privacy, and deep customization, but requires your own infrastructure. Closed models are faster to deploy and generally stronger on complex reasoning, but introduce data residency considerations and ongoing API costs. For data-sensitive industries, open-source is increasingly the stronger long-term architecture.